Day 19: Performance Analytics Engine - Turning Quiz Data Into Learning Insights

What We're Building Today

Today we're creating the brain that makes sense of all those quiz scores from yesterday's lesson. Here's what you'll have by the end:

High-Level Build Agenda:

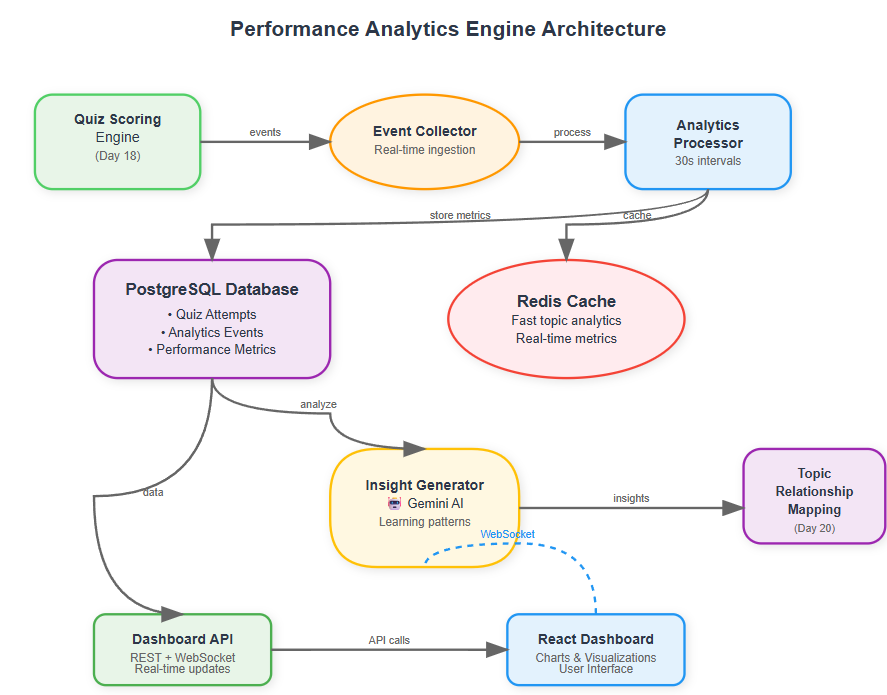

Real-time analytics processing service that crunches quiz performance data

AI-powered insight generator using Gemini for personalized learning recommendations

Live dashboard with charts showing learning trends and patterns

WebSocket connections for instant updates as students take quizzes

Integration bridge connecting Day 18's scoring engine to Day 20's topic mapping

Think of it as a Netflix-style recommendation engine for learning: it analyzes patterns, spots knowledge gaps, and predicts where students need help next.
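To make "spotting knowledge gaps" concrete before we build the real service, here is a minimal sketch in plain Python. Everything in it is an assumption for illustration: the `(student_id, topic, score)` record shape, the `find_knowledge_gaps` helper, and the 0.6 threshold are hypothetical and are not taken from Day 18's scoring engine.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical record shape: (student_id, topic, score between 0.0 and 1.0).
attempts = [
    ("s1", "fractions", 0.4),
    ("s1", "fractions", 0.5),
    ("s1", "decimals", 0.9),
    ("s1", "decimals", 0.8),
]


def find_knowledge_gaps(attempts, student_id, threshold=0.6):
    """Return the student's topics whose mean score is below threshold,
    weakest topic first. The threshold is an illustrative assumption."""
    scores_by_topic = defaultdict(list)
    for sid, topic, score in attempts:
        if sid == student_id:
            scores_by_topic[topic].append(score)

    # Average each topic, then keep only the ones under the threshold.
    averages = {topic: mean(scores) for topic, scores in scores_by_topic.items()}
    weak_topics = [topic for topic, avg in averages.items() if avg < threshold]
    return sorted(weak_topics, key=lambda topic: averages[topic])


print(find_knowledge_gaps(attempts, "s1"))
```

A real engine would likely weight recent attempts more heavily and stream updates instead of rescanning a list, but the core idea is the same: group scores by topic, aggregate, and flag what falls below a bar.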

Why Performance Analytics Matter in AI Systems

Ever wondered how Khan Academy knows exactly which math concept you're struggling with? Or how Duolingo decides when to review vocabulary you learned weeks ago? That's performance analytics at work.

In production AI systems handling millions of learners, analytics engines are the difference between generic education and personalized learning experiences. They transform raw interaction data into intelligence that drives better outcomes.