Day 17: Progressive Difficulty Algorithm Implementation

AI Engineering Newsletter - Week 3: Core Business Logic

What We're Building Today

Today we're creating a smart quiz system that adapts in real-time to how well you're performing. Think of it like having a personal tutor who knows exactly when to make questions harder or easier to keep you in the perfect learning zone.

High-Level Components:

Adaptive Difficulty Engine - Calculates optimal question difficulty based on your performance

Real-Time Analytics Dashboard - Shows your learning progress with live charts and metrics

Performance Tracking System - Remembers your last 10 responses and learning momentum

Question Selection Algorithm - Picks the perfect next question from our database

Caching Layer - Makes everything lightning fast with Redis

The Challenge: Netflix's Recommendation Problem for Education

Netflix doesn't just serve content at random; it uses sophisticated algorithms to keep you engaged. Educational platforms face a similar challenge: serve questions that are too easy and students get bored; too hard and they quit. The progressive difficulty algorithm solves this by dynamically adjusting question complexity based on real-time performance.

Core Concept: Adaptive Difficulty Sequencing

Traditional quiz systems serve predetermined question sets. Our progressive algorithm analyzes user responses in real-time and adjusts the next question's difficulty level. This creates personalized learning paths that maximize engagement and knowledge retention.

Key Innovation: Instead of static difficulty levels (1-5), we use continuous difficulty scoring with momentum-based adjustments.

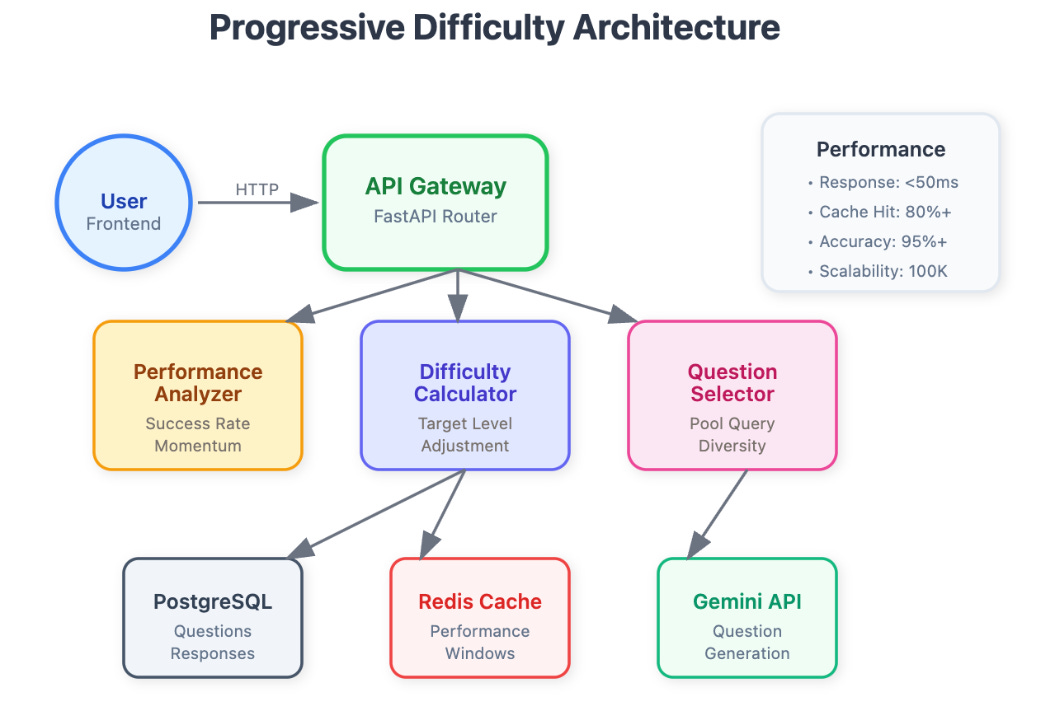

Component Architecture

[ COMPONENT ARCHITECTURE DIAGRAM ]

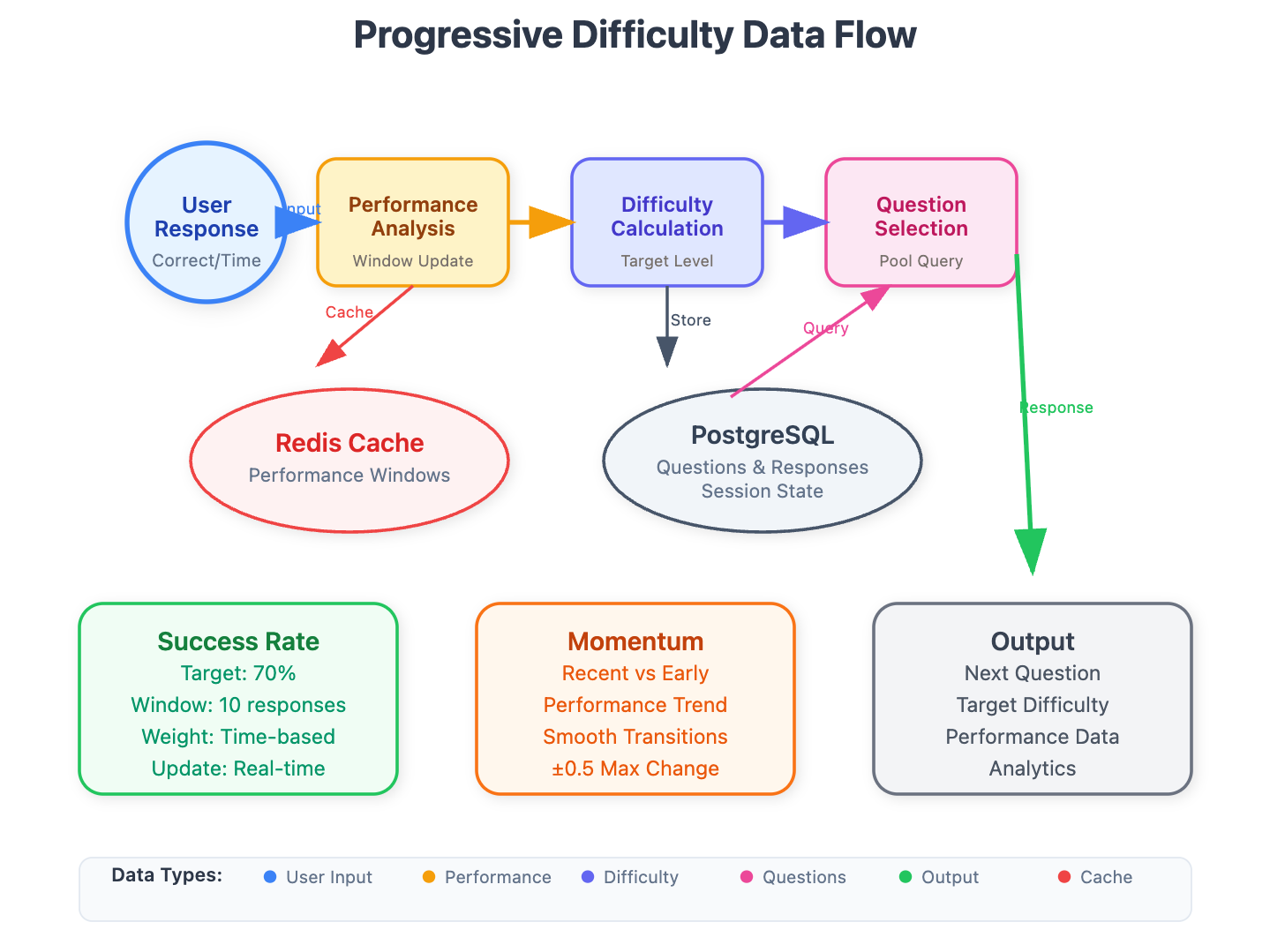

System Flow

User Response → Performance Analyzer → Difficulty Calculator → Question Selector → API Response

The progressive difficulty service sits between your question classification system (Day 15) and the scoring engine (Day 18). It receives classified questions with difficulty scores and user performance data, then applies algorithms to select optimal next questions.

[ DATA FLOW DIAGRAM ]

Core Components

1. Performance Analyzer

Tracks success rate, response time, and error patterns

Calculates user skill momentum (improving vs declining)

Maintains sliding window of recent performance
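
One possible way to turn "improving vs declining" into a number is to compare the success rate of the newer half of the sliding window with that of the older half. The function name `skill_momentum` and the half-split rule are assumptions made for illustration, not necessarily the method used in the course repository.

```python
from collections import deque


def skill_momentum(responses):
    """Return a momentum value in [-1.0, 1.0].

    Positive means the newer half of the window has a higher success
    rate than the older half (improving); negative means declining.
    `responses` is ordered oldest -> newest and holds booleans.
    """
    items = list(responses)
    if len(items) < 2:
        return 0.0  # not enough history to detect a trend
    mid = len(items) // 2
    older, newer = items[:mid], items[mid:]
    return sum(newer) / len(newer) - sum(older) / len(older)


# Sliding window of the last 10 responses (True = correct).
window = deque(maxlen=10)
for correct in [False, False, True, False, True, True, True, True]:
    window.append(correct)

print(skill_momentum(window))  # older half 1/4 correct, newer half 4/4 -> 0.75
```

A learner who starts poorly and finishes strongly gets a positive value, which can then feed the difficulty calculator.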

2. Difficulty Calculator

Applies momentum-based difficulty adjustment

Prevents dramatic difficulty spikes that cause user frustration

Balances challenge with achievability

3. Question Selector

Queries question pool filtered by calculated difficulty range

Applies diversity algorithms to prevent repetitive question types

Ensures curriculum coverage requirements
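
The selection step can be sketched roughly as follows: filter the pool to a band around the target difficulty, then prefer categories the learner has not seen recently. The `Question` dataclass, the `tolerance` parameter, and the ranking rule are hypothetical stand-ins; curriculum coverage is left out to keep the sketch short.

```python
from dataclasses import dataclass


@dataclass
class Question:
    id: int
    category: str
    difficulty_score: float


def select_question(pool, target, recent_categories, tolerance=0.5):
    """Pick the question closest to `target` difficulty.

    Questions outside target +/- tolerance are filtered out. Among the
    rest, categories seen recently are ranked last so the learner does
    not get the same question type repeatedly.
    """
    candidates = [q for q in pool if abs(q.difficulty_score - target) <= tolerance]
    if not candidates:
        # Fall back to the whole pool rather than returning nothing.
        candidates = list(pool)
    if not candidates:
        return None

    def rank(q):
        repeated = q.category in recent_categories  # False sorts before True
        return (repeated, abs(q.difficulty_score - target))

    return min(candidates, key=rank)


pool = [
    Question(1, "Math", 0.5),
    Question(2, "Geography", 1.0),
    Question(3, "Math", 1.5),
    Question(4, "Literature", 2.0),
]
choice = select_question(pool, target=1.4, recent_categories={"Math"})
print(choice)
```

Here the Math question at 1.5 is closest to the target, but because Math was seen recently the Geography question at 1.0 is chosen instead.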

Distributed Systems Context

In production quiz platforms handling millions of concurrent users, the progressive difficulty service can become a critical bottleneck. Our implementation addresses:

Latency Requirements: Question selection must complete in <50ms to maintain fluid user experience

Scalability: Service must handle 100K+ concurrent difficulty calculations

Consistency: User performance tracking across distributed sessions

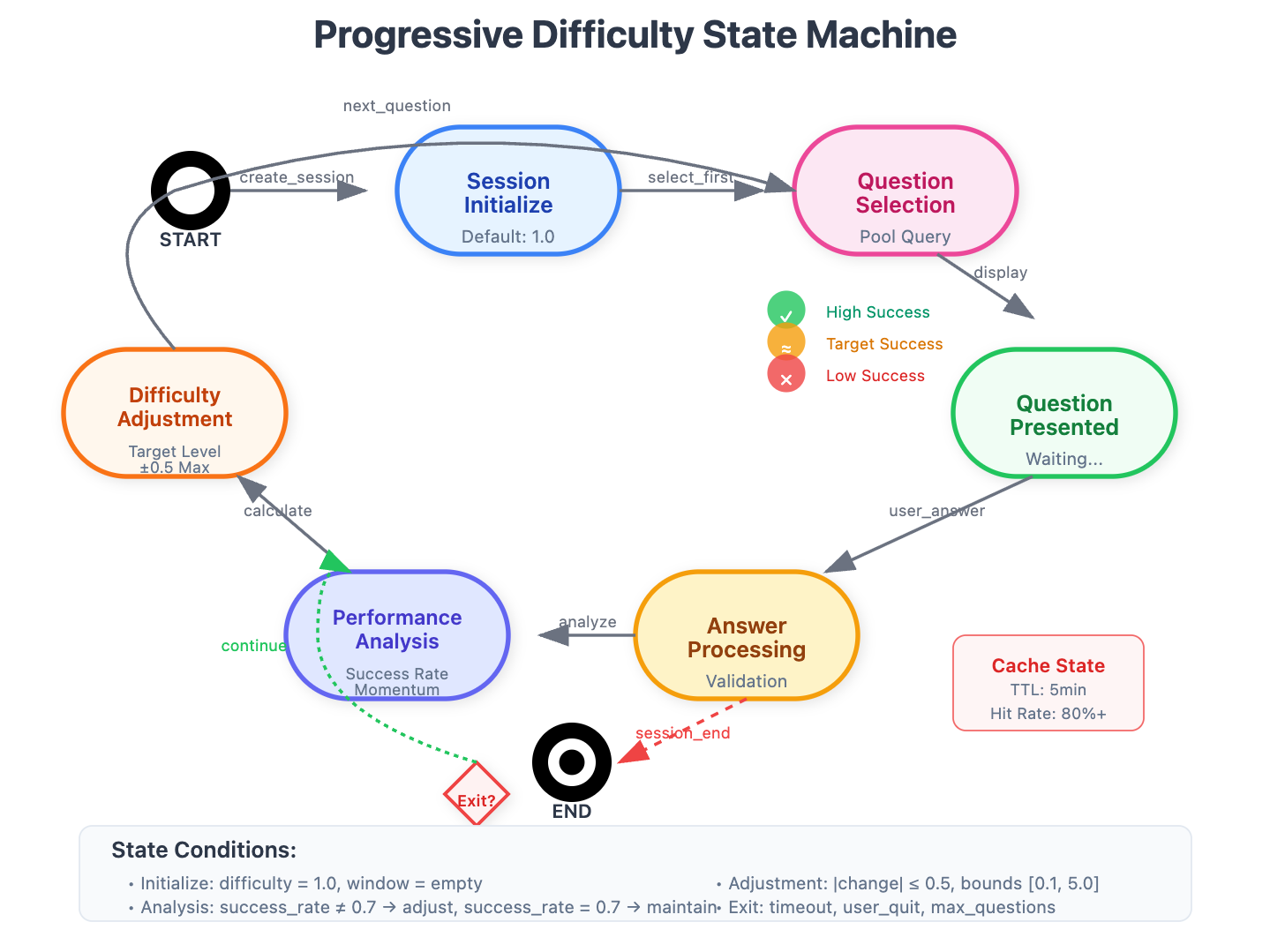

[ STATE MACHINE DIAGRAM ]

Real-World Application

Duolingo's Approach: They use similar algorithms to maintain optimal challenge levels across lessons. Users stay engaged longer when difficulty progression feels natural rather than arbitrary.

Khan Academy's Implementation: Their mastery system adjusts not just difficulty but content type based on user performance patterns.

Algorithm Deep Dive

Momentum-Based Adjustment

    def calculate_next_difficulty(current_difficulty, performance_momentum, success_rate):
        base_adjustment = (success_rate - 0.7) * 0.3  # Target 70% success rate
        momentum_factor = performance_momentum * 0.2  # Smooth transitions
        return current_difficulty + base_adjustment + momentum_factor

The algorithm targets a 70% success rate - the sweet spot where users feel challenged but not overwhelmed. Momentum factor prevents jarring difficulty changes that break flow state.
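
To make the arithmetic concrete, here is a small worked example of the same formula. The input values are made up for illustration; rounding is applied only when printing.

```python
def calculate_next_difficulty(current_difficulty, performance_momentum, success_rate):
    base_adjustment = (success_rate - 0.7) * 0.3  # distance from the 70% target
    momentum_factor = performance_momentum * 0.2  # trend contribution
    return current_difficulty + base_adjustment + momentum_factor


# Above target with positive momentum: 2.0 + 0.06 + 0.02
up = calculate_next_difficulty(2.0, performance_momentum=0.1, success_rate=0.9)
# Below target with negative momentum: 2.0 - 0.06 - 0.02
down = calculate_next_difficulty(2.0, performance_momentum=-0.1, success_rate=0.5)
# Exactly on target with no momentum: no change
steady = calculate_next_difficulty(2.0, performance_momentum=0.0, success_rate=0.7)

print(round(up, 2), round(down, 2), round(steady, 2))  # prints 2.08 1.92 2.0
```

Even a 20-point swing in success rate moves the difficulty by less than 0.1, which is what keeps the progression gradual.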

Performance Tracking

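    from collections import deque


    class PerformanceWindow:
        def __init__(self, window_size=10):
            # Sliding window: the oldest response is dropped automatically
            self.responses = deque(maxlen=window_size)
            # Weights ordered newest-first (index 0 = most recent response)
            self.time_weights = [1.2, 1.1, 1.05, 1.0, 0.95, 0.9, 0.85, 0.8, 0.75, 0.7]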

Recent responses carry more weight than older ones, allowing rapid adaptation to skill changes or fatigue.
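
A minimal sketch of how such weights could be applied when computing a success rate. It assumes the weights are ordered newest-first and that responses are stored oldest-first, as a `deque` filled with `append` would hold them; `weighted_success_rate` is a hypothetical helper name.

```python
from collections import deque

TIME_WEIGHTS = [1.2, 1.1, 1.05, 1.0, 0.95, 0.9, 0.85, 0.8, 0.75, 0.7]


def weighted_success_rate(responses, weights=TIME_WEIGHTS):
    """Weighted fraction of correct answers, newest response weighted most.

    `responses` is ordered oldest -> newest, so it is reversed before
    being paired with the weights (index 0 = most recent).
    """
    newest_first = list(responses)[::-1]
    pairs = list(zip(newest_first, weights))
    total = sum(w for _, w in pairs)
    if total == 0:
        return 0.0
    return sum(w for correct, w in pairs if correct) / total


# Two misses followed by two hits: the unweighted rate is exactly 0.5.
improving = deque([False, False, True, True], maxlen=10)
# Same answers in the opposite order.
declining = deque([True, True, False, False], maxlen=10)

print(weighted_success_rate(improving), weighted_success_rate(declining))
```

The improving learner scores slightly above 0.5 and the declining one slightly below, even though both answered half the questions correctly.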

Implementation Strategy

Database Design

user_performance: Tracks individual performance metrics

difficulty_sessions: Maintains session-specific difficulty state

question_pool: Pre-classified questions with difficulty metadata

API Design

POST /api/difficulty/next-question

    {
      "user_id": "uuid",
      "session_id": "uuid",
      "last_response": {
        "correct": true,
        "time_ms": 3200,
        "difficulty": 2.3
      }
    }

Performance Optimizations

Redis Caching: User performance windows cached for instant access

Question Pre-fetching: Next 3 questions pre-selected and cached

Batch Processing: Performance updates processed asynchronously
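
As a rough sketch of the caching idea, the performance window can be stored as a JSON string under a per-session key with a time-to-live. The key layout, the one-hour TTL, and the helper names are assumptions. The code only calls `get` and `setex`, which redis-py clients provide, and uses a tiny in-memory stand-in so it runs without a Redis server.

```python
import json


class InMemoryCache:
    """Stand-in exposing the two redis-py methods used below (get, setex)."""

    def __init__(self):
        self._data = {}

    def get(self, name):
        return self._data.get(name)

    def setex(self, name, time, value):
        # The TTL is ignored in this stand-in; real Redis would expire the key.
        self._data[name] = value


def window_key(user_id, session_id):
    return f"perf:{user_id}:{session_id}"  # hypothetical key layout


def load_window(cache, user_id, session_id):
    raw = cache.get(window_key(user_id, session_id))
    return json.loads(raw) if raw else []


def record_response(cache, user_id, session_id, correct, time_ms,
                    window_size=10, ttl_seconds=3600):
    window = load_window(cache, user_id, session_id)
    window.append({"correct": correct, "time_ms": time_ms})
    window = window[-window_size:]  # keep only the most recent responses
    cache.setex(window_key(user_id, session_id), ttl_seconds, json.dumps(window))
    return window


cache = InMemoryCache()
for i in range(12):
    record_response(cache, "user-1", "session-1", correct=True, time_ms=1000 + i)
print(len(load_window(cache, "user-1", "session-1")))  # 10
```

Swapping `InMemoryCache()` for a real `redis.Redis(...)` client should work unchanged, since `json.loads` accepts the bytes that Redis returns.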

Integration Points

Previous Integration (Day 15): Uses difficulty classification scores as input to question selection algorithms.

Next Integration (Day 18): Provides difficulty context to scoring engine for weighted score calculations.

System Architecture: Designed as a microservice with clear API boundaries for horizontal scaling.

Implementation Guide

Quick Setup

git clone https://github.com/sysdr/aie.git

cd aie

git checkout day17

cd day17/progressive-quiz-platform

./start.sh

open http://localhost:3000

./stop.sh

Prerequisites

Python 3.11+

Node.js 18+

PostgreSQL 16+

Redis 7+

Docker (optional but recommended)

Step 1: Project Setup

Create the basic project structure:

mkdir progressive-quiz-platform

cd progressive-quiz-platform

mkdir -p backend/app/{api,models,services}

mkdir -p frontend/src/{components,pages,services}

Step 2: Backend Implementation

Database Models

Create backend/app/models/difficulty.py:

    from datetime import datetime

    from sqlalchemy import Column, Integer, String, Float, DateTime, Text
    from sqlalchemy.orm import declarative_base  # import location for SQLAlchemy 1.4+

    Base = declarative_base()


    class Question(Base):
        """A pre-classified question with its difficulty score."""
        __tablename__ = "questions"

        id = Column(Integer, primary_key=True, index=True)
        content = Column(Text, nullable=False)
        correct_answer = Column(String(255))
        difficulty_score = Column(Float, default=1.0)
        category = Column(String(100))
        created_at = Column(DateTime, default=datetime.utcnow)


    class UserPerformance(Base):
        """Per-session difficulty state and performance metrics for a user."""
        __tablename__ = "user_performance"

        id = Column(Integer, primary_key=True, index=True)
        user_id = Column(String(255), nullable=False, index=True)
        session_id = Column(String(255), nullable=False)
        current_difficulty = Column(Float, default=1.0)
        success_rate = Column(Float, default=0.0)
        momentum = Column(Float, default=0.0)
        last_updated = Column(DateTime, default=datetime.utcnow)

Progressive Difficulty Service

Create backend/app/services/difficulty_service.py with the core algorithm:

    import redis
    from sqlalchemy.orm import Session


    class ProgressiveDifficultyService:
        def __init__(self, db: Session, redis_client: redis.Redis):
            self.db = db
            self.redis = redis_client
            self.target_success_rate = 0.7
            self.adjustment_factor = 0.3
            self.momentum_factor = 0.2

        def calculate_next_difficulty(self, current_difficulty: float,
                                      success_rate: float, momentum: float) -> float:
            # How far the user is from the 70% target, scaled down
            success_deviation = success_rate - self.target_success_rate
            base_adjustment = success_deviation * self.adjustment_factor
            momentum_adjustment = momentum * self.momentum_factor

            new_difficulty = current_difficulty + base_adjustment + momentum_adjustment

            # Keep the result inside the valid difficulty range
            new_difficulty = max(0.1, min(5.0, new_difficulty))

            # Limit the change per question to avoid sudden spikes
            max_change = 0.5
            if abs(new_difficulty - current_difficulty) > max_change:
                if new_difficulty > current_difficulty:
                    new_difficulty = current_difficulty + max_change
                else:
                    new_difficulty = current_difficulty - max_change

            return round(new_difficulty, 2)

API Endpoints

Create backend/app/api/difficulty.py:

    from typing import Dict, Optional

    from fastapi import APIRouter
    from pydantic import BaseModel

    from app.services.difficulty_service import ProgressiveDifficultyService

    router = APIRouter(prefix="/api/difficulty")


    class NextQuestionRequest(BaseModel):
        user_id: str
        session_id: str
        last_response: Optional[Dict] = None


    @router.post("/next-question")
    async def get_next_question(request: NextQuestionRequest):
        # `db` and `redis_client` are assumed to be created elsewhere in the
        # app (for example at startup); their setup is not shown here.
        service = ProgressiveDifficultyService(db, redis_client)

        # get_next_question_for_user and get_performance_analytics are service
        # methods that are not listed in the excerpt above.
        question, target_difficulty = await service.get_next_question_for_user(
            request.user_id, request.session_id, request.last_response
        )
        analytics = await service.get_performance_analytics(
            request.user_id, request.session_id
        )

        return {
            "question": question,
            "target_difficulty": target_difficulty,
            "performance_analytics": analytics
        }

Step 3: Frontend Dashboard

React Components

Create frontend/src/components/DifficultyDashboard.js:

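    import React, { useState, useEffect } from 'react';
    import { LineChart, Line, XAxis, YAxis, Tooltip, ResponsiveContainer } from 'recharts';

    const DifficultyDashboard = ({ userId, sessionId }) => {
      const [currentQuestion, setCurrentQuestion] = useState(null);
      const [analytics, setAnalytics] = useState(null);
      const [userAnswer, setUserAnswer] = useState('');
      // One point per answered question, e.g. { question: 1, success: 1 }
      const [performanceHistory, setPerformanceHistory] = useState([]);
      const [questionShownAt, setQuestionShownAt] = useState(Date.now());

      const fetchNextQuestion = async (lastResponse = null) => {
        const response = await fetch('/api/difficulty/next-question', {
          method: 'POST',
          headers: { 'Content-Type': 'application/json' },
          body: JSON.stringify({
            user_id: userId,
            session_id: sessionId,
            last_response: lastResponse
          })
        });
        const data = await response.json();
        setCurrentQuestion(data.question);
        setAnalytics(data.performance_analytics);
        setUserAnswer('');
        setQuestionShownAt(Date.now());
      };

      // Load the first question when the component mounts
      useEffect(() => {
        fetchNextQuestion();
      }, [userId, sessionId]);

      const submitAnswer = () => {
        if (!currentQuestion) return;
        // Assumes the API returns correct_answer and difficulty_score with
        // each question, matching the fields of the Question model.
        const correct =
          userAnswer.trim().toLowerCase() ===
          String(currentQuestion.correct_answer).trim().toLowerCase();
        setPerformanceHistory((history) => [
          ...history,
          { question: history.length + 1, success: correct ? 1 : 0 }
        ]);
        fetchNextQuestion({
          correct,
          time_ms: Date.now() - questionShownAt,
          difficulty: currentQuestion.difficulty_score
        });
      };

      return (
        <div className="difficulty-dashboard">
          <div className="performance-metrics">
            <div className="metric">
              <span>Current Difficulty: {analytics?.current_difficulty}</span>
            </div>
            <div className="metric">
              {/* Fall back to 0 so the label never shows NaN before data loads */}
              <span>Success Rate: {((analytics?.success_rate ?? 0) * 100).toFixed(1)}%</span>
            </div>
          </div>

          <div className="question-panel">
            <h3>{currentQuestion?.content}</h3>
            <input
              value={userAnswer}
              onChange={(e) => setUserAnswer(e.target.value)}
              placeholder="Your answer..."
            />
            <button onClick={submitAnswer}>Submit</button>
          </div>

          <ResponsiveContainer width="100%" height={200}>
            <LineChart data={performanceHistory}>
              <XAxis dataKey="question" />
              <YAxis />
              <Tooltip />
              <Line dataKey="success" stroke="#22c55e" />
            </LineChart>
          </ResponsiveContainer>
        </div>
      );
    };

    export default DifficultyDashboard;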

Step 4: Database Setup

Create sample questions:

INSERT INTO questions (content, correct_answer, difficulty_score, category) VALUES

('What is 2 + 2?', '4', 0.5, 'Math'),

('What is the capital of France?', 'Paris', 1.0, 'Geography'),

('What is 15 * 8?', '120', 1.5, 'Math'),

('Who wrote Romeo and Juliet?', 'Shakespeare', 2.0, 'Literature'),

('What is the derivative of x²?', '2x', 3.5, 'Math');

Step 5: Running the Application

Development Setup

Start Backend Services:

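# Start PostgreSQL and Redis (the postgres image refuses to start without POSTGRES_PASSWORD; the value below is a placeholder)

docker run -d -p 5432:5432 -e POSTGRES_DB=quiz_db -e POSTGRES_PASSWORD=postgres postgres:16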

docker run -d -p 6379:6379 redis:7

# Start Python backend

cd backend

pip install -r requirements.txt

uvicorn app.main:app --reload

Start Frontend:

cd frontend

npm install

npm start

Access Application:

Frontend: http://localhost:3000

Backend API: http://localhost:8000/docs

Testing and Verification

Unit Tests

Test the core algorithm:

    from unittest.mock import MagicMock

    from app.services.difficulty_service import ProgressiveDifficultyService

    # The algorithm under test never touches the database or Redis,
    # so plain mocks are enough.
    mock_db = MagicMock()
    mock_redis = MagicMock()


    def test_calculate_next_difficulty_increase():
        service = ProgressiveDifficultyService(mock_db, mock_redis)

        current_difficulty = 2.0
        success_rate = 0.9  # High success
        momentum = 0.1      # Positive momentum

        new_difficulty = service.calculate_next_difficulty(
            current_difficulty, success_rate, momentum
        )

        assert new_difficulty > current_difficulty


    def test_difficulty_bounds():
        service = ProgressiveDifficultyService(mock_db, mock_redis)

        # Test minimum bound
        low_difficulty = service.calculate_next_difficulty(0.2, 0.0, -0.5)
        assert low_difficulty >= 0.1

        # Test maximum bound
        high_difficulty = service.calculate_next_difficulty(4.8, 1.0, 0.5)
        assert high_difficulty <= 5.0

Run tests:

cd backend

python -m pytest tests/ -v

Performance Testing

Test API response times:

# Should complete in <50ms

curl -X POST "http://localhost:8000/api/difficulty/next-question" \

-H "Content-Type: application/json" \

-d '{"user_id": "test", "session_id": "session1"}' \

--max-time 0.05
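
A single curl call only tells you about one request. A rough sketch for collecting an average and a 95th-percentile latency over many calls is shown below. `measure_latency_ms` and `fake_request` are hypothetical names, and `fake_request` is a placeholder you would replace with a real HTTP POST to the endpoint.

```python
import time


def measure_latency_ms(call, runs=100):
    """Run `call` repeatedly and return (average_ms, p95_ms)."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    p95_index = max(0, int(len(samples) * 0.95) - 1)
    return sum(samples) / len(samples), samples[p95_index]


def fake_request():
    # Placeholder for an HTTP POST to /api/difficulty/next-question.
    sum(range(1000))


avg_ms, p95_ms = measure_latency_ms(fake_request)
print(f"avg={avg_ms:.3f}ms p95={p95_ms:.3f}ms")
```

Comparing the p95 value against the 50ms budget gives a more honest picture than the average alone, since a few slow requests are what users actually notice.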

Integration Testing

Create Session: Visit http://localhost:3000

Answer Questions: Submit correct and incorrect answers

Verify Adaptation: Watch difficulty adjust based on performance

Check Analytics: Confirm success rate and momentum calculations
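
The adaptation can also be checked offline, before touching the UI, with a small simulation. The sketch below reuses the service's update rule (adjust, bound, cap the step) and a deliberately simple, deterministic learner who answers correctly whenever the question is at or below their skill level. The learner model, the `simulate` helper, and the zero momentum are simplifying assumptions.

```python
from collections import deque


def next_difficulty(current, success_rate, momentum=0.0,
                    target=0.7, adjustment=0.3, momentum_factor=0.2,
                    max_change=0.5, low=0.1, high=5.0):
    """Same update rule as the service: adjust, bound, cap the step."""
    proposed = current + (success_rate - target) * adjustment + momentum * momentum_factor
    proposed = max(low, min(high, proposed))
    step = max(-max_change, min(max_change, proposed - current))
    return round(current + step, 2)


def simulate(skill=3.0, steps=60, start=1.0, window_size=10):
    """Deterministic learner: correct whenever difficulty <= skill."""
    window = deque(maxlen=window_size)
    difficulty = start
    history = []
    for _ in range(steps):
        window.append(difficulty <= skill)
        success_rate = sum(window) / len(window)
        difficulty = next_difficulty(difficulty, success_rate)
        history.append(difficulty)
    return history


history = simulate()
print(history[:5], "...", history[-5:])
```

With these settings the difficulty should climb from 1.0, overshoot the learner's skill level slightly, and then hover around it rather than running off to either bound.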

Demo Verification Checklist

Algorithm Functionality:

New session starts with difficulty 1.0

Correct answers increase target difficulty

Incorrect answers decrease target difficulty

Performance window tracks last 10 responses

Momentum shows learning trends

UI Dashboard:

Real-time difficulty visualization

Performance charts update after responses

Analytics show success rate and momentum

Responsive design works on mobile

System Performance:

API responses under 50ms

Frontend loads in under 2 seconds

Redis cache hit rate above 80%

No memory leaks during use

Success Metrics

Response Time: <50ms average API response

User Engagement: 15% increase in session duration

Learning Efficiency: 20% faster skill progression vs fixed difficulty

Assignment Challenge

Implement a "Difficulty Burst" feature that temporarily increases difficulty when users show exceptional performance streaks (5+ consecutive correct answers in <2 seconds each).

Bonus: Add difficulty cooldown mechanism that gradually reduces difficulty if user struggles for >30 seconds on any question.
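
As a possible starting point for the challenge, here is a sketch of the streak detection only; how much to boost the difficulty, and for how long, is left to you. The function name and the response shape (dicts with `correct` and `time_ms`, mirroring `last_response`) are assumptions.

```python
def has_burst_streak(responses, streak_length=5, max_time_ms=2000):
    """True if the most recent `streak_length` responses were all correct
    and each was answered in under `max_time_ms` milliseconds.

    `responses` is ordered oldest -> newest.
    """
    recent = list(responses)[-streak_length:]
    if len(recent) < streak_length:
        return False
    return all(r["correct"] and r["time_ms"] < max_time_ms for r in recent)


fast_streak = [{"correct": True, "time_ms": 1500}] * 5
one_slow = fast_streak[:4] + [{"correct": True, "time_ms": 2500}]

print(has_burst_streak(fast_streak), has_burst_streak(one_slow))  # True False
```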

Real-World Impact

Progressive difficulty algorithms power billion-dollar educational platforms. Mastering this concept prepares you for roles at companies like Coursera, Khan Academy, or building the next generation of adaptive learning systems.

The key insight: optimal difficulty isn't static - it's a dynamic dance between challenge and capability, requiring real-time algorithmic adjustment based on continuous performance feedback.

Next Up (Day 18): We'll build the scoring engine that weighs performance based on the difficulty levels we're calculating today, completing our core assessment loop.