Day 113: Gradient Boosting Machines - Building Production-Grade Ensemble Systems

What We’ll Build Today

A complete Gradient Boosting implementation from scratch that mirrors production ensemble architectures

A fraud detection system using sequential error correction, similar to systems processing millions of transactions at PayPal and Stripe

A performance benchmark comparing GBM against single models, to see where the 20-40% accuracy improvements often cited for production systems come from

Why This Matters: The Secret Behind Modern AI Dominance

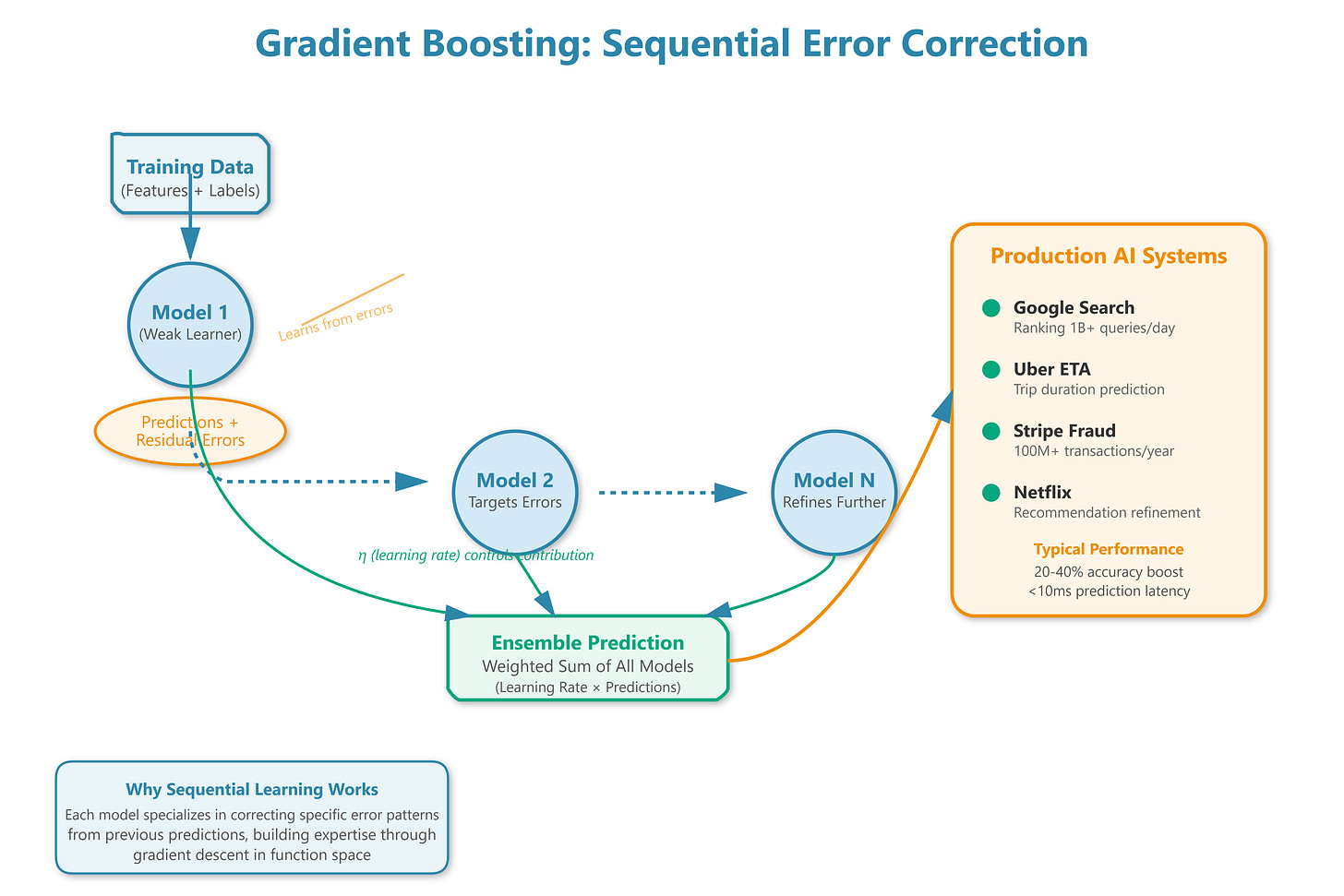

When Kaggle competitions on tabular data keep producing the same winning algorithm, you pay attention. Gradient Boosting Machines dominate those leaderboards not through complexity, but through a deceptively simple principle: learning from mistakes systematically. While neural networks grab headlines, GBM quietly powers critical decision systems at companies like Google (search ranking) and Uber (ETA prediction), and at many of the fraud detection platforms processing billions of dollars in transactions.

The genius lies in sequential optimization. Instead of training one massive model and hoping it captures everything, GBM builds an ensemble of weak learners in which each new model is fit to the errors left by its predecessors. Think of it as a team of specialists, each an expert at correcting specific types of mistakes. This approach delivers exceptional accuracy on tabular data while remaining reasonably interpretable through feature importances and per-prediction attributions, a critical requirement when explaining why a loan was denied or a transaction was flagged as fraudulent.
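The sequential error correction described above can be sketched in a few lines. This is a minimal illustration, not a production implementation: it assumes squared-error loss (so the "errors" each round targets are plain residuals), a single numeric feature with at least two distinct values, and one-split decision stumps as the weak learners. The function names (`fit_stump`, `fit_gbm`, `gbm_predict`) and the toy data are hypothetical, chosen only for this example.

```python
# Minimal gradient boosting for squared error, using one-split "stumps"
# as weak learners. Pure Python; every name here is illustrative.

def fit_stump(xs, residuals):
    """Pick the threshold on a 1-D feature that best splits the residuals."""
    best = None
    # Skip the largest value so the right-hand side is never empty.
    for t in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        lm = sum(left) / len(left)
        rm = sum(right) / len(right)
        sse = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, t, lm, rm)
    _, t, lm, rm = best
    return t, lm, rm

def stump_predict(stump, x):
    t, lm, rm = stump
    return lm if x <= t else rm

def fit_gbm(xs, ys, n_rounds=50, learning_rate=0.1):
    base = sum(ys) / len(ys)          # round 0: predict the mean
    preds = [base] * len(ys)
    stumps = []
    for _ in range(n_rounds):
        # Each new learner is fit to what the ensemble still gets wrong.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * stump_predict(stump, x)
                 for p, x in zip(preds, xs)]
    return base, learning_rate, stumps

def gbm_predict(model, x):
    base, lr, stumps = model
    return base + lr * sum(stump_predict(s, x) for s in stumps)

def mse(model, xs, ys):
    return sum((gbm_predict(model, x) - y) ** 2 for x, y in zip(xs, ys)) / len(ys)

# Toy data: a curve that no single stump can fit well.
xs = [i / 10 for i in range(40)]
ys = [x * x for x in xs]

baseline = fit_gbm(xs, ys, n_rounds=0)   # mean-only model
boosted = fit_gbm(xs, ys, n_rounds=50)
print("mean-only MSE:", mse(baseline, xs, ys))
print("boosted MSE:  ", mse(boosted, xs, ys))
```

Each stump on its own is a poor model, but because every round is fit to the residuals of the rounds before it, the training error of the ensemble shrinks step by step. A fraud classifier would swap the squared-error residuals for the gradient of a classification loss such as log loss, while the loop keeps the same shape.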

In production systems handling 10,000+ predictions per second, GBM’s efficiency becomes crucial. Each weak learner is typically a shallow decision tree (depth 3-6), so evaluating a single tree takes only a handful of comparisons. The sequential architecture also enables optimization strategies that a single model cannot offer, such as early stopping on a validation set or truncating the ensemble to trade accuracy for latency. Note that a boosted ensemble does not produce confidence intervals on its own; in risk-sensitive applications, uncertainty bounds are usually obtained by training additional models with a quantile loss.
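To make the latency argument concrete, here is the standard additive form of a boosted ensemble. The symbols are introduced here for illustration and are not from the text above: $M$ is the number of trees, $d$ the maximum tree depth, $\nu$ the learning rate, $F_0$ the initial constant prediction, and $h_m$ the $m$-th tree.

$$
F_M(x) = F_0 + \nu \sum_{m=1}^{M} h_m(x)
$$

Evaluating one tree $h_m$ follows a single root-to-leaf path, which takes at most $d$ threshold comparisons, so one prediction costs at most $M \cdot d$ comparisons plus $M$ additions. As a rough example, an ensemble of $M = 200$ trees with $d = 4$ needs at most $800$ comparisons per prediction, and keeping only the first $M' < M$ terms of the sum gives a cheaper, less accurate model without retraining.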